Google search algorithm updates have always struck fear into the hearts of webmasters who care about their site rankings, and we suppose they always will. Google plays hardball, webmasters play whack-a-mole.

Why is this so when these updates presumably aim to provide the best user experience and results to searchers and to reward websites for high quality content?

Everybody in the SEO field knows that Google applies more than 200 ranking factors when deciding which websites get the highest positions on the SERP. However, before trying to improve each of those 200 ranking factors, you are better off first learning which of them affect a website negatively or positively, and only then starting your work.

It is difficult to consider even half of Google’s ranking factors when you optimize a website, and some webmasters don’t even want to try. Instead they go for black-hat SEO in order to get quick results. Their quickly won high positions are eventually lost and end with a Google Penalty which, simply put, means trouble. Google is too smart, so don’t try to cheat.

What Is a Google Penalty and Why Is It Dangerous for Your Website?

A Google Penalty is a negative impact on your rankings which comes after Google conducts a manual review or an algorithm update. This means that you did something to your website that was against Google’s webmaster guidelines and your website was mentioned in a spam report. If you see that your rankings and traffic suddenly dropped within a few days, especially after a new algorithm update, this usually means that a Google Penalty was applied according to the new rules.

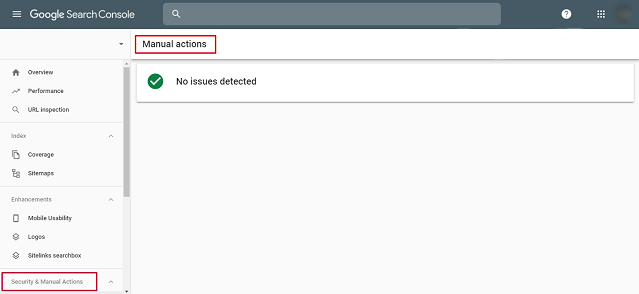

You can check whether your website was punished in Google Search Console. However, this shows you only manual review results. A penalty as a result of the latest algorithm update can be confirmed only via website ranking and traffic analysis.
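The traffic analysis mentioned above can be sketched in a few lines. This is only an illustration, not a WebCEO or Google tool: the function name `traffic_change`, the 7-day window, and the visit numbers are all made up for the example, and you would feed in daily visits exported from your own analytics.

```python
from datetime import date, timedelta
from statistics import mean


def traffic_change(daily_visits: dict[date, int], update_day: date, window: int = 7) -> float:
    """Relative change in mean daily visits after update_day versus before it."""
    # Days strictly before the update, up to `window` days back.
    before = [v for d, v in daily_visits.items() if 0 < (update_day - d).days <= window]
    # The update day itself and the following days, `window` days in total.
    after = [v for d, v in daily_visits.items() if 0 <= (d - update_day).days < window]
    if not before or not after:
        raise ValueError("need data on both sides of the update date")
    baseline = mean(before)
    if baseline == 0:
        raise ValueError("no traffic before the update, change is undefined")
    return (mean(after) - baseline) / baseline


# Hypothetical data: 1000 visits a day before the update, 600 a day after it.
update = date(2020, 12, 3)
visits = {update - timedelta(days=i): 1000 for i in range(1, 8)}
visits.update({update + timedelta(days=i): 600 for i in range(0, 7)})

print(f"{traffic_change(visits, update):.0%}")  # prints -40%
```

A sharp drop that lines up with a known update date is a hint, not a proof: seasonality or technical issues can produce the same picture, so compare against the same period in previous months as well.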

There are two types of Google Penalty: partial matches and site-wide matches. The first type is applied when several artificial or low quality links point to specific pages of your website, costing you valuable organic traffic. The second type takes place when the backlink profile of your entire website needs a significant and immediate audit and cleanup.

A rank decrease may affect all the pages of your website, a specific keyword, or a specific page.

What Stands Behind Google Algorithm Updates

A Google Algorithm Update covers a bunch of changes for Google’s ranking algorithm, including improvements in the existing algorithm or a set of new rules on how to analyze the quality of websites and then rank them in the SERPs.

Since Google launched in 1998, a lot of algorithm updates have happened. Of course, more often people pay attention only to the major ones, e.g. Panda, Penguin, Hummingbird, and Pigeon. However, there are actually more of them which have had a significant impact on the current SERPs view and which have been a total pain in the neck for many webmasters:

Google May Day Update

Release date: April 28 – May 3, 2010

This update aimed at rewarding websites with high quality long tail content. Its peculiarity was that Google started paying more attention to the quality of the presented content: a page could reach higher positions on the SERPs for specific long tail keywords despite a poor backlink profile. The main goal was relevancy of the content presented.

The greatest losses came to websites that sold goods in generic terms. Because they focused mostly on short tail keywords, they went through significant traffic and ranking drops for long tail keywords.

Remedy: fill your website with enough long tail keywords. This may come in the form of articles, extended reviews, long descriptions, a characteristics overview, guidance, and so on.

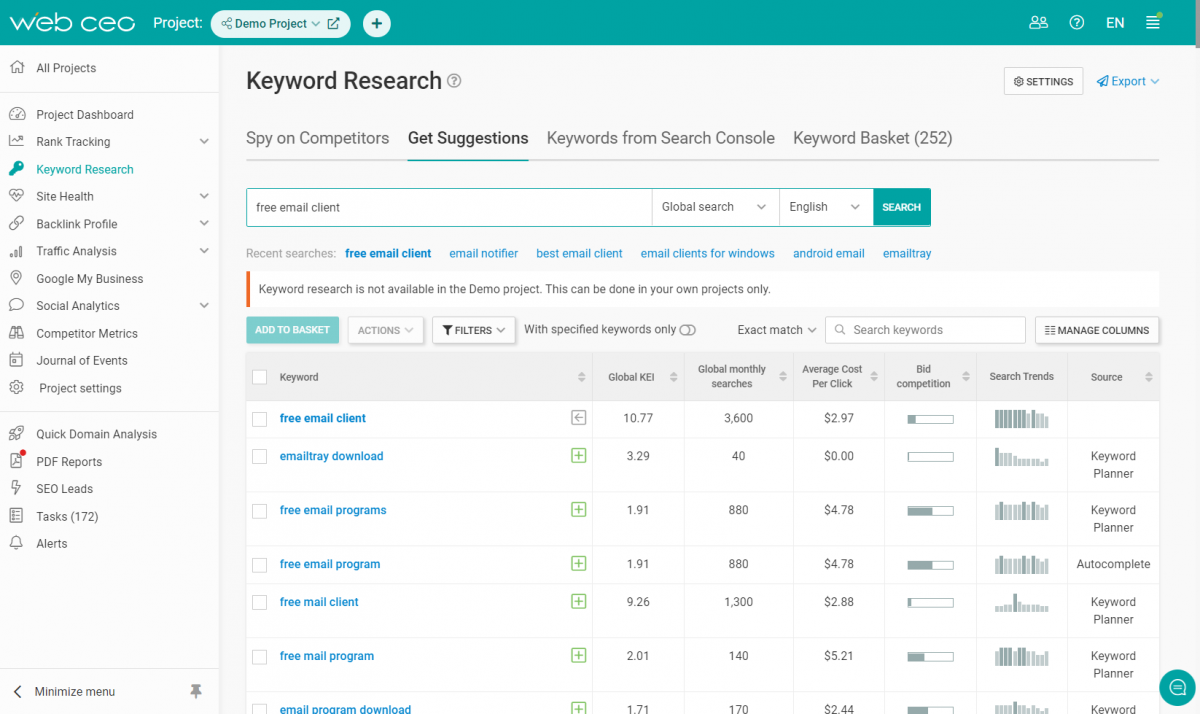

WebCEO’s Keywords Research Tool will help you to work on keywords and create unique and winning combinations for your website.

Google Panda Update

Release date: February 23, 2011

This update aims at rewarding websites with high quality content and punishing low quality websites. Panda looks for everything you did in order to get a higher position on a SERP without quality in your pocket. This could have been:

- Low quality content. Whether it is employee or machine generated, it will lead you nowhere. Google has said multiple times that quality is everything, and it is better to listen to these words if you want to be first and keep your positions for a long time. Make sure the text reads fluently.

- Unnatural language, in other words, a keyword “overdose”. If you put too many keywords in your text, this will be noticed almost immediately, simply because neither we nor Google bots are used to reading content with a great repetition of specific words. Google also finds this suspicious and doesn’t delay penalties.

- Thin content or lack of content. This is when you have a really low amount of material on one of your website’s pages. Google likes it when you spend more time and present in-depth content to searchers. Writing a short paragraph with little sense, but an overdose of keywords, is not a good decision.

- Content farming, i.e. a method of creating a significant amount of low quality content, for example a bunch of very short articles written for popular search queries, with the aim of getting greater traffic and revenue. Google doesn’t like it when content is created with the aim of ad monetization. User experience and quality should always be first.

- Lack of authority/trustworthiness. This plays negatively with your rankings, and you can see it by analyzing your website performance: how often your content is updated, domain age, type, and authority, a poor or bad backlink profile, visitor behavior on your website, etc.

- Inappropriate ads. If there are a lot of advertisements on your website and those are not relevant to your content or disturbing for a website visitor, you can eventually expect a penalty from Panda. The situation may become especially risky if the amount of advertisements overtakes the amount of content (ad-to-content ratio).

Remedy: content improvement.

1. Work on the content of your website. Rewrite your material in order to make it of high quality, put keywords only in places where their presence is necessary and use only those keywords which are relevant to your niche and specifically to the page you are trying to improve. It will not be a bad decision if you remove all the pages with low quality content.

2. Forget about advertisements for a second. Go to websites that are trustworthy and popular and learn their advertisement profile: how many ads they have, whether they are disturbing, and their relevancy to a website’s niche. Then come back to your place and think about the same points regarding your website. Optimize things properly and create an ideal place for visitors.

3. No black-hat SEO. Honest and “clean”, well done content will bring you success and traffic, therefore heightening your authority. Searchers will come to your place and stay there for a long time. Google needs nothing more.

Google Exact Match Domain (EMD) Update

Release date: September, 2012

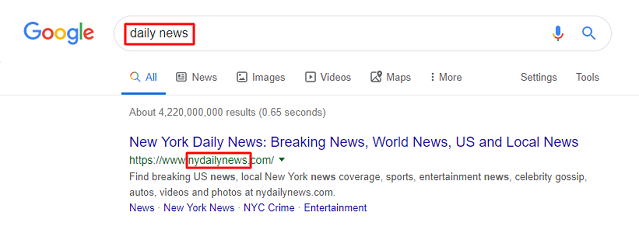

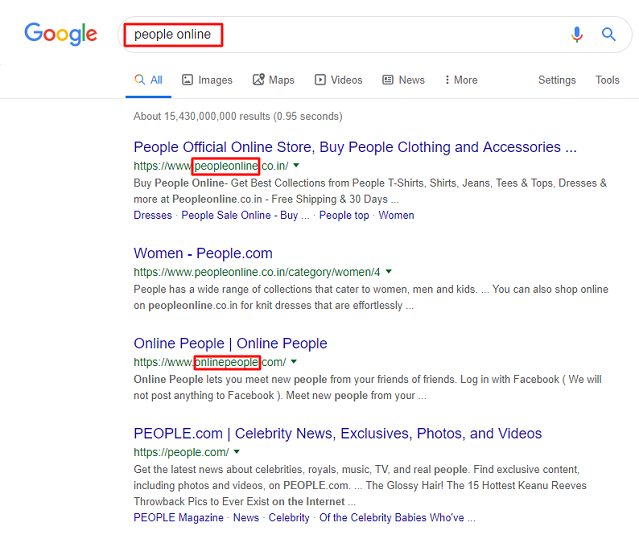

The Google Exact Match Domain (EMD) Update focused on websites with domain names that exactly repeated a searcher’s query and that got to the top pretty much based on that alone.

Remedy: unfortunately, no advice will help here, because you either have such a domain name or you don’t. It’s no longer a guarantee at all that you will score for a keyword just because your domain name is an exact match.

Google Penguin Update

Release date: April 24, 2012

Penguin was released to punish websites that try to improve their positions by getting links from low quality websites and by stuffing pages and anchor links with an enormous amount of keywords. Such schemes are easily recognizable even for a user. Google only needs to check websites from which those links came and then present you a penalty.

Nobody likes low quality websites: by default, a user will not find any good material there and can’t trust them, so it is better not to visit them at all. Accordingly, being linked to from such a website will reflect badly on you.

Remedy: forget about black-hat SEO.

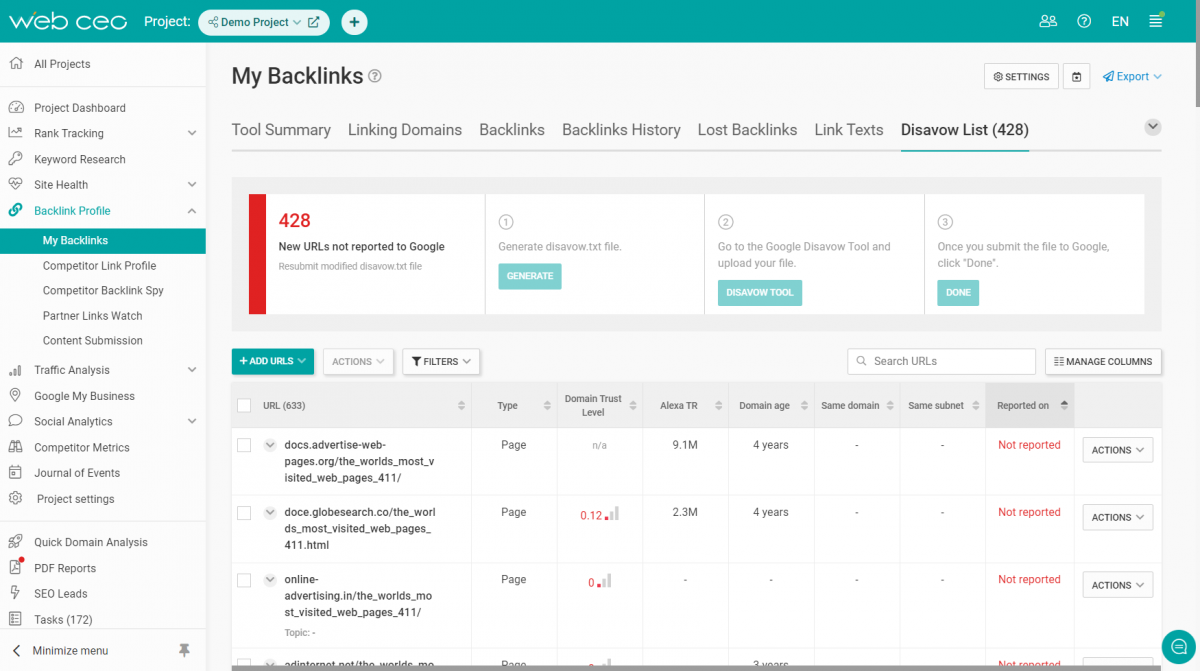

1. Don’t try to build fast, easy, or paid spammy backlinks, because those will more often bring you harm instead of success. Take your time and try to get links from websites which have already reached some popularity and high domain authority. WebCEO’s My Backlinks Tool will help you to learn your backlink profile from A to Z, including link texts and linking domains, and will show you toxic pages that link to your website.

Use white hat link building techniques like high quality guest blogging, link round-ups, the skyscraper technique, etc, which will be a win for both sides. By taking these steps, webmasters will gain a decent backlink profile which will be appreciated by Google, and build up domain authority for their website.

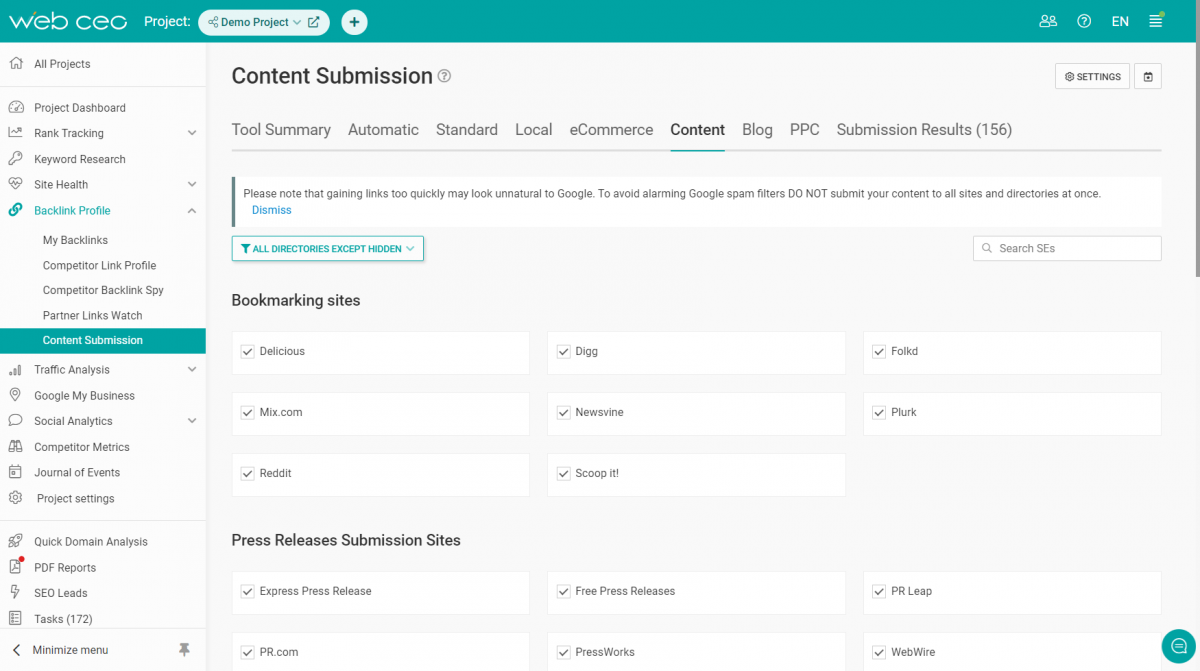

WebCEO’s Content Submission Tool will help you to find the best places where your content can be your best advertisement.

2. Avoid keyword stuffing in any form and place. Keywords are not flowers which you can put anywhere and enjoy. Their main mission is to help you find readers, not to attract Google’s attention. Find the best variants, build some relevant long tail or short tail keywords which are successful for your niche, find some synonyms, and put them into your text so that neither users nor Google will be annoyed. Google recognizes synonyms and can reward you even more for using them.
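To get a rough feeling for whether a text is overloaded with a keyword, you can measure how much of it the keyword takes up. The sketch below is a simple illustration and not how Google detects stuffing: the function name `keyword_density` is hypothetical, and there is no official density threshold, so treat the number only as a signal to reread the text.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Share of the words in `text` that belong to occurrences of `keyword`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    phrase = keyword.lower().split()
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    # Count every position where the whole phrase appears word for word.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)
    return hits * n / len(words)


stuffed = "Cheap shoes! Cheap shoes, buy cheap shoes now."
print(keyword_density(stuffed, "cheap shoes"))  # 0.75
```

Three quarters of the sample sentence is the keyword itself, which is exactly the kind of repetition a human reader notices at once.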

Google Hummingbird Update

Release date: August 20, 2013

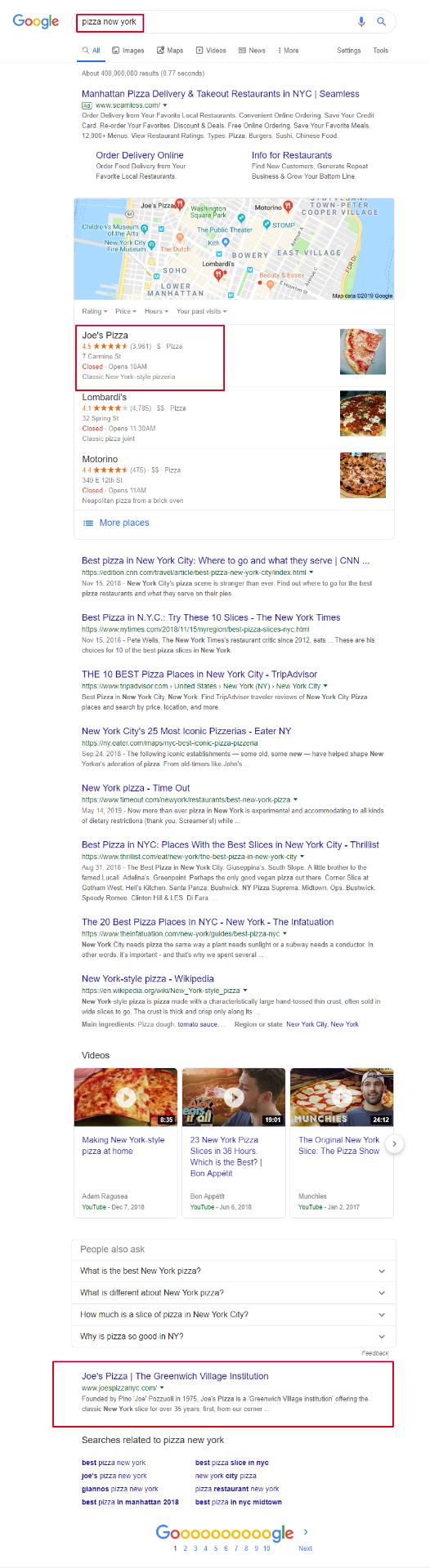

Because semantic search is so complicated, the Google Hummingbird update went farther than any other update. It tried to understand a searcher’s way of thinking at the moment they wanted to find something on Google. This approach extended the borders of information which should be presented. For instance, Google would not just show the definition of “pizza” on its SERPs, but information which concerns that word: recipes, history, the nearest pizza places, the most popular among them, recommendations in the form of “people also ask”, etc. Hummingbird tries to get you the most accurate results concerning your query by analyzing what you probably need this information for.

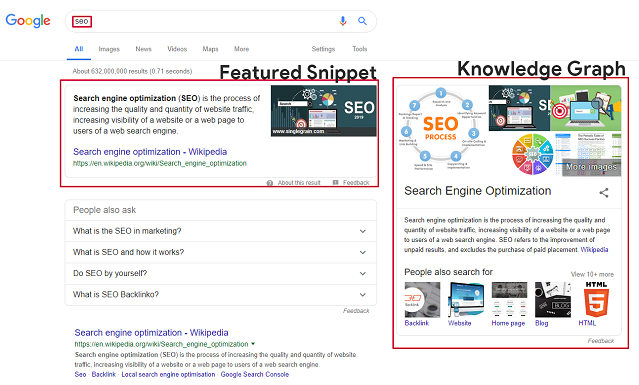

Hummingbird works with a “knowledge graph” which was first presented in 2012. There one can usually find relevant answers to a query, and you won’t even need to enter a website! With this update, local search was vastly improved. Since Hummingbird gives you less primitive information and goes deeper into your wishes, local businesses were given a large incentive to do better with their Internet performance by improving title tags, keywords in descriptions, and conducting website updates.

Advice: structure your content properly.

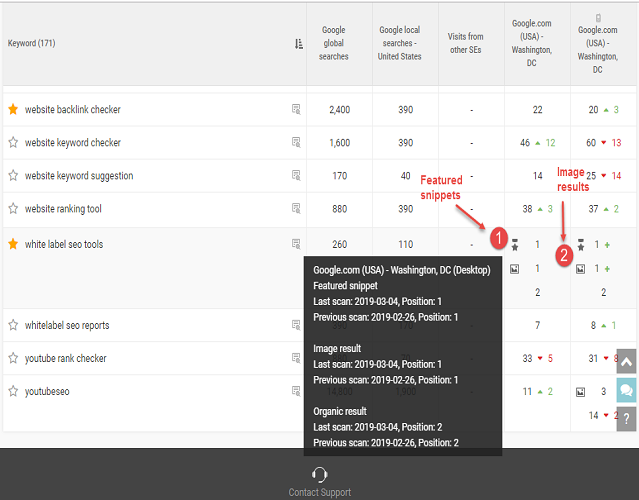

1. Because Hummingbird uses knowledge graphs, it has become better to write your content in a way that answers the following questions: Who? What? Where? When? Why? How? By doing this you heighten the chances of your website being chosen as an answer for a searcher’s query and you can also be selected for a Featured Snippet (technically speaking, we are suggesting that you optimize your Open Graph code and your Schema code – webmasters will know what we mean). This helps to bring more traffic.

WebCEO’s Rank Tracking Tool will show you your results in organic search and whether your website was shown in a Featured Snippet or Knowledge Panel (the box where Knowledge Graph data is presented).

2. Diversify your content. Long articles with comprehensive analysis are really great and Google likes them, but for a visitor’s convenience you can write paragraphs that are easy and quick to read. Moreover, these short articles can also be used by Google in a knowledge panel.

3. Your language should follow your niche. You can write in a simple, interesting, and engaging way, but don’t forget that you must create content related to a definite niche. Don’t make your article too easy; use up-to-date terms, statistics, diagrams, and so on. Remember that all those terms are your keywords, and Hummingbird can consider your information more relevant to somebody’s query than anything else.

4. Set up Schema markup. This markup helps determine whether your page is eligible to be shown with a rich snippet. The data presented in your Schema markup can help visitors judge what your site represents: ratings, quantity of reviews and skillful descriptions. If you are an owner of a local business you can present more data concerning your working hours, menu, and phone numbers.
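As an illustration of the last point, here is a minimal sketch that builds JSON-LD markup for a local business. The helper name `local_business_jsonld` and all business details are invented for the example. The property names (`name`, `telephone`, `address`, `openingHours`, `url`) come from the schema.org `LocalBusiness` vocabulary, but which properties Google actually uses for a given rich result is something to check in its own documentation.

```python
import json


def local_business_jsonld(name: str, url: str, telephone: str,
                          street: str, city: str, opening_hours: list[str]) -> str:
    """Return a <script> tag with schema.org LocalBusiness data in JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
        },
        "openingHours": opening_hours,
    }
    # Escape "</" so that no value can close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


# Hypothetical business details.
snippet = local_business_jsonld(
    name="Example Pizza",
    url="https://example.com",
    telephone="+1-555-0100",
    street="1 Example Street",
    city="Springfield",
    opening_hours=["Mo-Fr 10:00-22:00", "Sa-Su 12:00-23:00"],
)
print(snippet)
```

The resulting tag goes into the page’s HTML. Before publishing, it is worth running the page through a structured data testing tool to confirm the markup is read the way you expect.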

Google Pigeon Update

Release date: July 24, 2014

Pigeon brought a lot of changes to local SEO after its release:

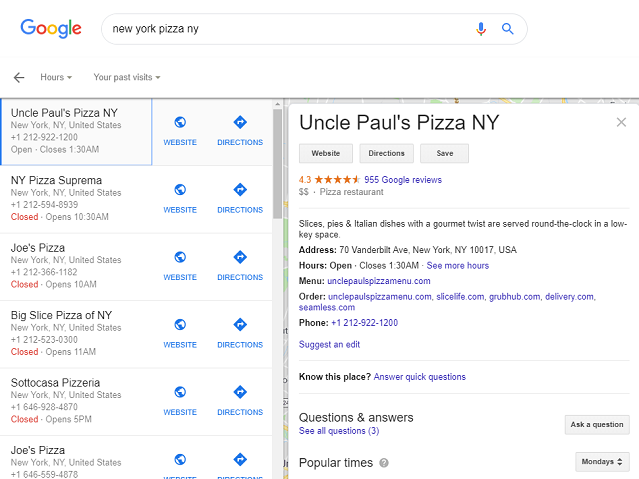

- With Pigeon, the 7-pack changed into the 3-pack: since the update, searchers can now see the three best results for local businesses instead of seven. You can also see a map on the SERP above the 3-pack which shows the distance to those three places;

- Pigeon shows you results not only depending on the closeness of venues to you, but also takes into account a website’s position for that keyword in organic search results. In simple words, Pigeon sees the nearest places to you, analyzes their organic positions on the SERP considering all SEO ranking factors, rates them, and then finally presents a list of the best options for you in local search. This is a great feature because you receive not just the ordinary results of where you can go, but the best results.

Advice: become visible.

1. Make your business visible on all local business directories: Facebook, LinkedIn, Bing, Yelp, and many others. Local ranking factors have become more and more important: reviews, citations, links, social media engagement, and so on.

2. Use local search terms as your keywords. Write them down in a snippet of your own content, in title tags, and in the descriptions of your place in the local directories. Of course, don’t forget to mention them in your text.

3. Time to think about your website optimization. As Pigeon takes into account a website’s organic SERP results, you should take care of your website performance: high quality content, backlink profile, domain authority, mobile-friendliness, etc.

4. If you have a local brick and mortar business, go to the major travel websites, e.g. TripAdvisor, in order to gain some popularity, backlinks, and good reviews.

Google Mobile-Friendly Update

Release date: April 21, 2015

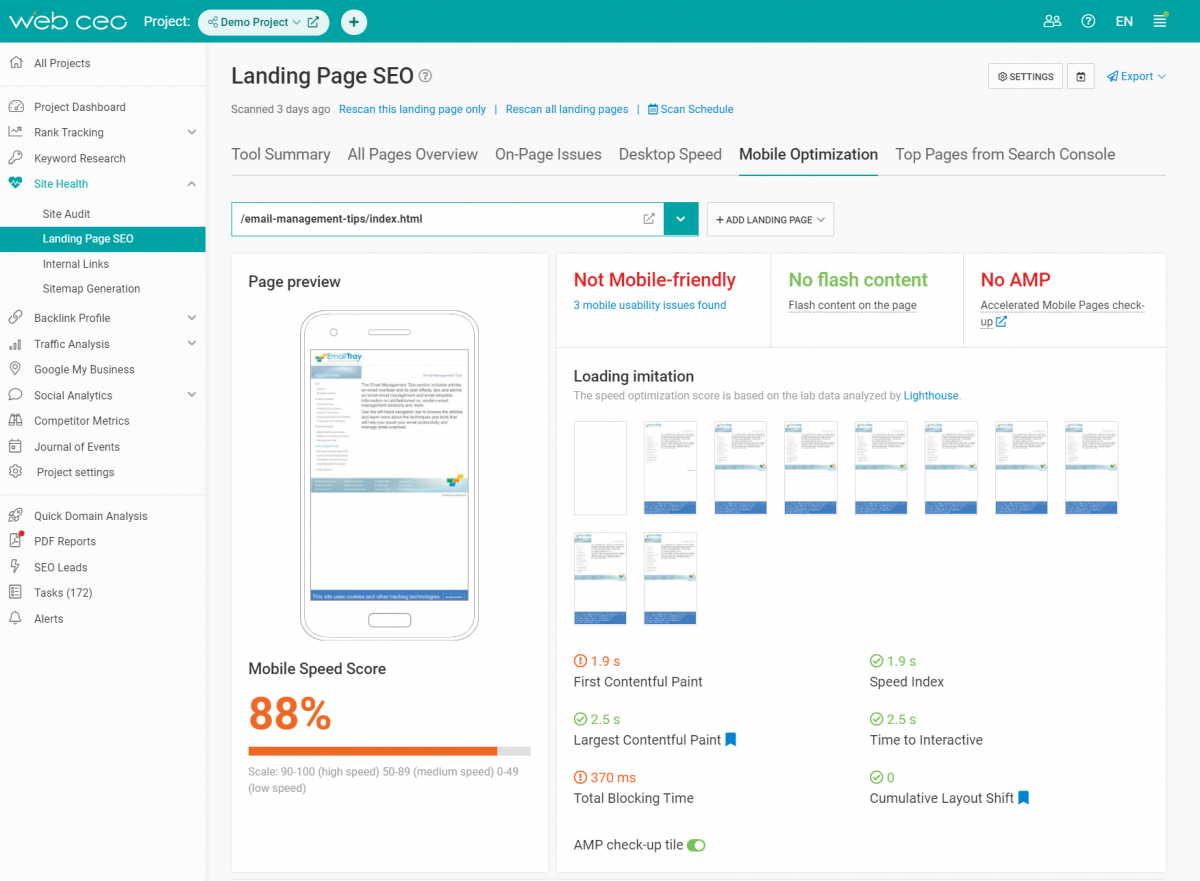

Mobile devices are everywhere nowadays. Users have traded their desktops for smartphones and prefer to chill out with them 24/7. Google sees trends and follows them. Trying to provide users with the best performance even on mobile devices, Google released its Mobile-Friendly Update, which affected only those websites that weren’t optimized for smartphones. It has been a great motivator for webmasters to make their sites convenient for any type of device. Going into detail: this update does not lower your search rankings on desktops at all. As it concerns only mobile devices, only your mobile rankings suffer if you haven’t optimized your website yet. It won’t necessarily affect the whole website, either: pages that are mobile-friendly will not be “touched” by Google. Google has even provided a test that helps website owners check whether a website is mobile-friendly.

Advice: make your website mobile-friendly. Use AMP for this purpose, a framework that provides for the fast and smooth loading of your website on mobile devices.
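One quick thing you can check yourself is whether a page declares a responsive viewport, which is a common prerequisite for a mobile-friendly layout. The sketch below is only a heuristic under that assumption and is not Google’s test: the names `ViewportFinder` and `has_responsive_viewport` are made up, and a page can pass this check and still be awkward to use on a phone.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Remembers the content of the <meta name="viewport"> tag, if any."""

    def __init__(self) -> None:
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        if tag == "meta":
            attributes = dict(attrs)
            if (attributes.get("name") or "").lower() == "viewport":
                self.viewport = attributes.get("content") or ""


def has_responsive_viewport(html: str) -> bool:
    """True if the page sets the viewport width to the device width."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.viewport is None:
        return False
    return "width=device-width" in finder.viewport.replace(" ", "")


page = '<html><head><meta name="viewport" content="width=device-width, initial-scale=1"></head></html>'
print(has_responsive_viewport(page))  # True
```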

WebCEO’s Landing Page SEO Tool will give you detailed information concerning your website’s mobile optimization, so you can discover any issues and see instructions on how to solve them.

With Google Mobile-First Indexing, which was enabled by default for new websites on July 1, 2019, a website’s mobile-friendliness gains even more value. Google has begun to crawl and index websites primarily from the mobile version’s point of view. If you run a website that Google hasn’t seen yet and it is not mobile-friendly, be ready to experience problems with your indexing and rankings.

Google Possum Update

Release date: September 1, 2016

Possum was an improved version of Pigeon in which developers addressed the disadvantages of the latter:

- This update let companies situated beyond a city’s borders be shown in a local search when searchers specified that city. Earlier this was impossible, because Pigeon focused on places which were strictly on the territory of a chosen city. Even if a website had good positions in organic search results, it could not be seen in local results because of this.

- Google improved its results sorting. Now it doesn’t show several results which belong to one address. For example, if there are two coffee houses in one place near you, Google will show you only one of them in order to avoid duplicate content. The second result will also be presented on a list, but pushed down.

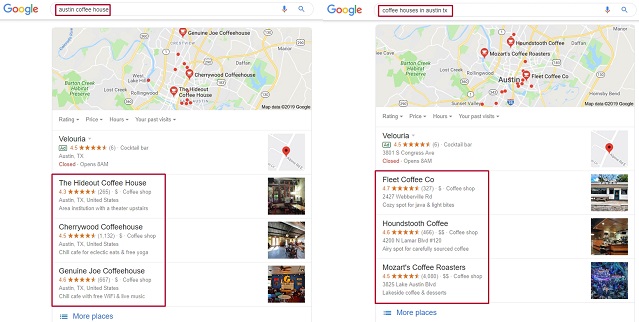

- Now you will see different results for keyword variations. Even a slight difference between them will give a list of new places.

- Possum is more sensitive to a searcher’s physical location than it was before. Now the 3-pack shows you not just the best results for you, but also which of them are the closest.

- Local search has become more independent from the organic results. Even despite low rankings in organic search, some businesses do really well in local search results.

Remedy:

1. As Google still considers organic results while giving searchers the best local matches, it is important to constantly keep track of the general website’s performance: backlink profile, domain authority, etc.

2. Because of the keyword variation problem, you should do comprehensive research on the keywords you are ranked for. You may need to replace some of them with new ones or add other variants.

Google Fred Update

Release date: March 7-8, 2017

The codename “Fred” is not official; it was jokingly proposed by Gary Illyes on Twitter as a name for otherwise unnamed updates. The target of this update was to punish websites that use black-hat SEO and too many advertisements for aggressive monetization. “Fred” fights against websites that:

- contain an excessive amount of advertisements;

- have thin, low quality content;

- contain text written about different topics with the aim of fast rank position gains;

- have little benefit for users, have a lot of page issues, and have a bad impact on the user experience;

- are not mobile-friendly.

Remedy: reevaluate your website’s quality.

1. Your website should belong to a specific niche and fulfill a user’s needs with relevant content, which is rich and well written, without keyword stuffing and without any signs of thin or duplicate content.

2. There should be no game playing with title tags, metadata, keywords, and schema code. Any attempts to use black-hat SEO must be stopped immediately. Google doesn’t like them and you should not either.

3. Be modest when it comes to advertisements on your website. An excessive amount of them will always disturb users, and they will leave your site instantly, raising your bounce rate, which Google doesn’t like. Remember that a lot of people nowadays use ad blockers, so the chances of getting something from those advertisements may become minimal.

Google Medic Update

Release date: August 1, 2018

The “Medic” update presumably punishes websites which can negatively influence people’s well-being. This includes: health, financial security, safety of a user, and so on. To be specific, this update affects websites which:

- require personal information, e.g. name, date of birth, Personal Identification Number, Social Security Number, bank account number, or driver’s license: on the whole, the type of information which may be used for identity theft;

- offer goods for buying and use insecure monetary transactions, e.g. online shops, where the information about your credit card and bank account number is used and may be potentially stolen for the sake of lucre;

- offer a user some advice or general information regarding the medical sphere and health, which, in Google’s estimation, may be harmful;

- present information in the form of advice regarding important life problems and future decisions, e.g. serious purchases like cars, houses, stocks, some financial advice and so on.

Remedy: raise trust among users.

1. Work on your landing pages and content – make it of high quality and erase all features which Google doesn’t like: low quality, thin, duplicate content, keyword stuffing, and everything else that Panda hunts for. Take the freshest information from trustworthy and official sources, attaching statistics, tables, diagrams, etc. With this you will show users and Google that you haven’t pulled your data out of thin air.

2. E.A.T. concept – expertise, authoritativeness, trust. Write in detail on your About page who you are, why you can be useful, and why people should trust you – prove that you are a specialist who has, for example, the necessary background and education to be an expert in a chosen niche. If you recommend some information that goes against the words of most scientists, then give your users more proof that what you say may be right (Copernicus was right after all). Trust rises with good reviews about your website, so ask people/your customers/visitors/subscribers to leave a comment concerning their attitude to your website.

3. Create an author bio which will present you as a specialist. This variant should be used if your About page gives information concerning the services you provide on your website. In your bio you can write about yourself as an expert in a specific sphere and why people can trust you. Maybe you have a Bachelor’s, Master’s, or Doctoral degree, or have completed some courses or an internship, and so on. Google presumably also wants to trust you, so give it such an opportunity.

Google never stops developing and updating. Each time, website owners encounter more and more new rules and limits, which they should obey in order to stay visible on the SERPs. 2019 followed this trend as well. The June Google Core Update made a lot of changes. Many YMYL websites were impacted and fell in the rankings. These are “Your Money or Your Life” sites that presume to sell you things that affect your health and financial situation. Meanwhile educational and informational resources gained more authority. Learn more about the June 2019 Google Core Update in order to protect your website from decreasing rankings and pick up new rules.

Google September 2019 Core Update

Release date: September 24, 2019

As the June 2019 Core Update’s successor, the September Core Update focused on websites that in any way might damage people’s well-being. Websites containing information regarding health, money, travel and medicine were the target of this update. Some publishing websites like The Daily Mail did better after this update, having fallen with the June update. Google says that any kind of information that might have any influence on people’s lives should be harmless. It’s still too early to describe everything this update might have brought. However, some points already cry for your attention.

Remedy: revise your content.

1. The E.A.T. concept still needs to be followed: show your visitors that you don’t get information out of thin air. Disclose your qualifications and prove your credibility so that neither Google nor users hesitate to come to your website and later apply the knowledge you provide.

2. Monitor your backlinks, both coming to and from you: the sources you use while creating your content might influence people’s trust in you as a professional and as a writer. Unproven and suspicious websites you are linking to will make people trust you less, because they will not be sure whether they can handle the data or not unless you provide them with recognized specialists’ opinions.

3. Freshness is an always winning feature: update your content with fresh and relevant information. If you use any statistics in your texts, make sure the figures are recent and a hundred percent true.

Google BERT Update

Release date: October 21, 2019

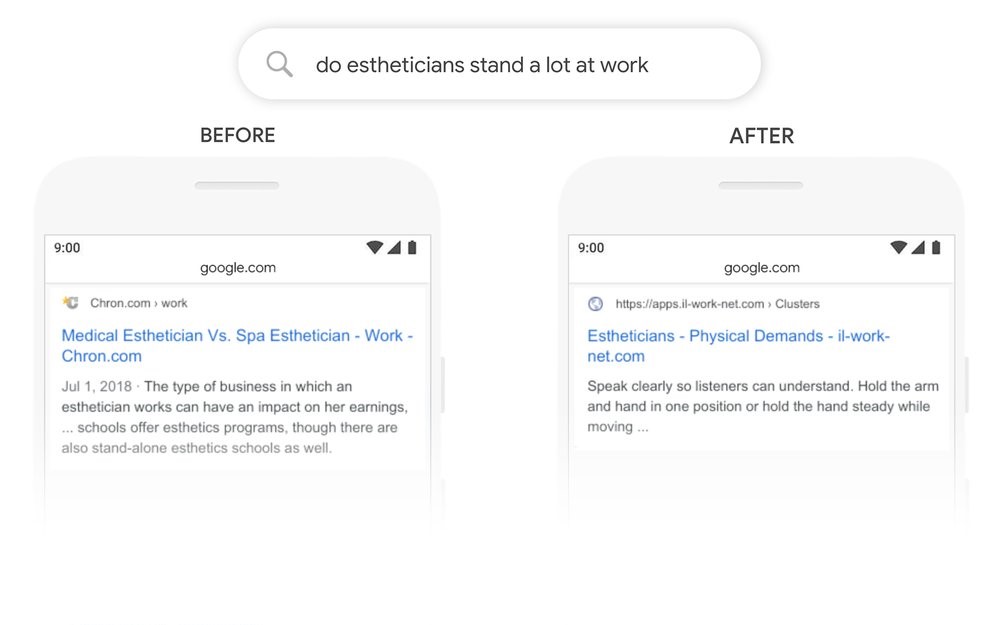

The BERT algorithm (Bidirectional Encoder Representations from Transformers) is not a simple update to the existing algorithm. It is the introduction of a new system that helps Google understand users’ natural language and provide them with more accurate results for their queries. In a sense, we can call BERT Hummingbird’s successor, because both these updates are focused on the understanding of search intent.

BERT goes deeper into the analysis of a searcher’s query, catching its context. It considers whole word groups, the prepositions that surround the key word of a query, and other language units. BERT analyzes the linguistics to understand what a person really wants and delivers the most accurate results. This update also influences featured snippets.

Google has provided examples of how the BERT algorithm works:

Remedy: there are no particular instructions on how to write content or optimize your website for this update. BERT was created to understand people’s way of thinking while creating a query from a linguistic point of view. The advice is to write for people in a natural way: no machine-generated content.

Google November 2019 Local Search Update

Release date: November 2019

As Google announced on their official Twitter account, neural matching will be used in delivering local search results. Neural matching is used “to better understand how words are related to concepts”. In simple words, neural matching was implemented to help the search engine build connections between the information about a local business and a searcher’s query and deliver more accurate results regarding particular businesses someone might be looking for even without specific names in a query. This might also concern similar location and business names and how to distinguish between these.

There is no remedy for this type of update. This concerns only Google’s ability to better understand what people might be looking for.

Google Link Attributes Update

Release date: September 10, 2019

Google introduced additional link attributes. Besides rel="nofollow" there will be two more attributes to use:

- rel="sponsored": an attribute to a link to specify that it is a part of a sponsored promotion;

- rel="ugc": an attribute to a link to specify that it is a part of user generated content: comments or forum posts.

There is no remedy for this update, just a helpful feature for webmasters. Starting from March 2020, these attributes are considered by Googlebot when crawling and indexing to understand a website’s content better.
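If your site generates links in code, for example in comments or sponsored blocks, the attributes can be attached when the link is built. The following is a minimal sketch: the helper name `build_link` and the set `ALLOWED_REL` are made up for the example and are not part of any Google or WebCEO API. The values can be combined in one `rel` attribute, separated by spaces.

```python
from html import escape

# The three link attribute values discussed in this section.
ALLOWED_REL = {"nofollow", "sponsored", "ugc"}


def build_link(url: str, text: str, rel: tuple[str, ...] = ()) -> str:
    """Return an <a> tag, optionally marked with rel values."""
    unknown = set(rel) - ALLOWED_REL
    if unknown:
        raise ValueError(f"unsupported rel values: {sorted(unknown)}")
    rel_values = " ".join(rel)
    rel_attr = f' rel="{rel_values}"' if rel else ""
    # Escape the URL and the link text so they cannot break the markup.
    return f'<a href="{escape(url, quote=True)}"{rel_attr}>{escape(text)}</a>'


print(build_link("https://example.com", "Example", ("ugc", "nofollow")))
# <a href="https://example.com" rel="ugc nofollow">Example</a>
```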

Google Rich Results Update

Release date: September 16, 2019

Rich snippets for the “LocalBusiness” and “Organization” schema types (including their sub-types) have become the center of attention. Google decided to “eliminate” self-serving reviews for these categories. These are the reviews that are placed on a website’s pages with the help of widgets. You don’t have to switch off such widgets if you are using them currently, it’s just that Google will no longer show these on the SERPs. This update concerns organic search alone. If there are reviews about your website on other directories – that are not managed by you – such reviews will still be shown in SERPs.

Remedy: there is no exact remedy for such updates. Our advice is to work and wait for 5-star reviews about your business on other websites. The name property has also become important: when reviewing a product, it’s necessary to mention its name for the feedback to be more useful for users.

Google Snippet Update

Release date: October 2019

In June, France adopted a copyright reform according to which Google and other huge services have to pay media resources even for a tiny piece of content used on their platforms, for instance, an article’s abstract shown on the SERP. Due to these changes in EU legislation, Google introduced new robots meta tags that give webmasters an option to choose how their snippets will look on the SERP. Currently, Google search results for some queries in France look like an ordinary list of websites without previews.

Remedy: this is not a problem to be solved or an update that can influence your rankings. It is a feature that lets webmasters decide whether, and to what extent, Google can show a content snippet from their site in the SERPs. It is up to you whether to use these meta tags or not.

New robots meta tags from Google:

“max-snippet:[number]” for the quantity of characters;

“max-video-preview:[number]” for the length of a video-preview;

“max-image-preview:[setting]” for the size of an image-preview.

You are also free to mix these.
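As a minimal sketch of how these directives can be mixed, the helper below builds one robots meta tag from whichever limits you set. The function name `robots_meta` is hypothetical. The image preview settings it accepts (`none`, `standard`, `large`) are the values Google documents for this directive, `max-snippet` counts characters, and `max-video-preview` counts seconds.

```python
from typing import Optional

IMAGE_PREVIEW_SETTINGS = {"none", "standard", "large"}


def robots_meta(max_snippet: Optional[int] = None,
                max_video_preview: Optional[int] = None,
                max_image_preview: Optional[str] = None) -> str:
    """Build a robots meta tag from the snippet directives that were given."""
    directives = []
    if max_snippet is not None:
        directives.append(f"max-snippet:{max_snippet}")
    if max_video_preview is not None:
        directives.append(f"max-video-preview:{max_video_preview}")
    if max_image_preview is not None:
        if max_image_preview not in IMAGE_PREVIEW_SETTINGS:
            raise ValueError(f"unknown image preview setting: {max_image_preview}")
        directives.append(f"max-image-preview:{max_image_preview}")
    if not directives:
        raise ValueError("set at least one directive")
    content = ", ".join(directives)
    return f'<meta name="robots" content="{content}">'


print(robots_meta(max_snippet=50, max_image_preview="large"))
# <meta name="robots" content="max-snippet:50, max-image-preview:large">
```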

Google January 2020 Core Update

Release date: January 2020

Google continues to work on presenting the best content in organic search results. Authority and relevant content of high quality were again emphasized, especially for YMYL (Your Money Your Life) websites. Some of the websites of this type have experienced a rank drop, some have gotten to the top.

Featured Snippet deduplication is now a thing. A website that earned a featured snippet in the SERP will not be seen elsewhere on the first page anymore, at least not with the identical URL and anchor. This is because the featured snippet itself will be considered as the organic result.

Remedy: create high quality and relevant content that will answer people’s questions very well. Don’t dilute your text. Creating content that covers as many topics as possible is great. However, don’t try to shed light on everything in one shot. People don’t want to read three kilometers of text. They want accurate answers. Provide them with these, and they will look for other articles of yours.

To increase your authority, try to link to and get links from authoritative websites. A kind word and mention from a big and respected name will always matter.

Google May 2020 Core Update

Release date: May 2020

Relevancy is stressed again here. High quality has become a very broad metric. This may include great text, a cool and funny style, a lot of photos/videos, statistics and so on simultaneously. However, it can have zero value for a user if it doesn’t answer a query. Take into account the fact that Google highlights text on a webpage that is a clear answer to a user’s question to help them get what they wanted.

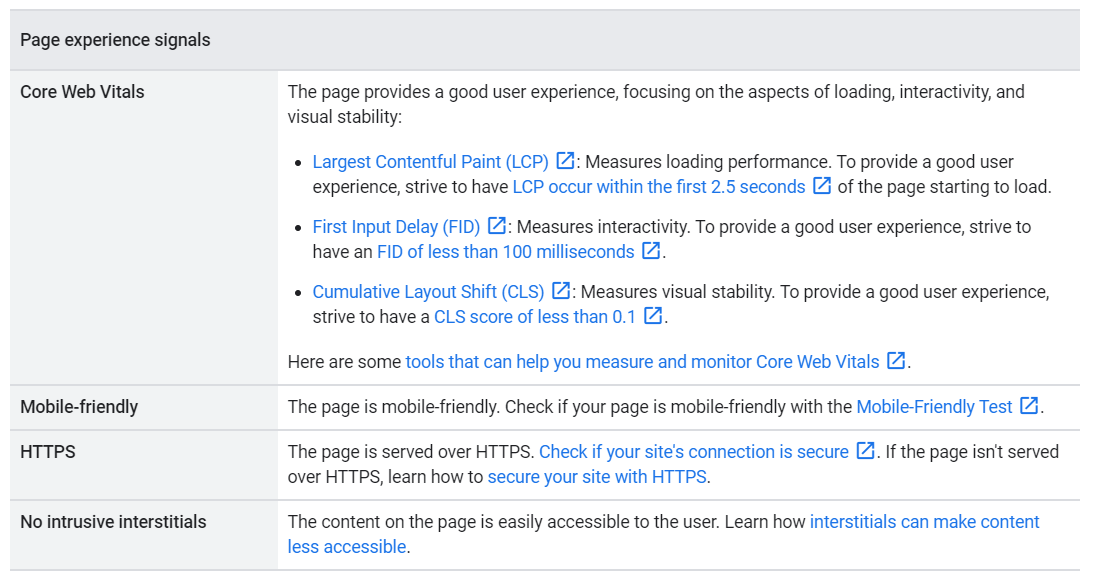

Core Web Vitals – Largest Contentful Paint, First Input Delay, Cumulative Layout Shift – all these are gaining more and more importance, and eventually may become official ranking factors. The User Experience is not a joke for Google. So it shouldn’t be for you. The way a user interacts with a website plays an important role in his or her further journey on it. Take some time to ensure the best possible experience in terms of content and website functionality.

The E-A-T concept (Expertise, Authoritativeness, Trustworthiness) hasn’t faded into oblivion. Your authority and professional expertise are key points on which people and Google decide whether to trust your words or not. A lot of websites in the YMYL niche (Your Money or Your Life) have experienced a massive decrease in rankings. Smaller websites with no less great content went up.

Nofollow links that were previously ignored by Google have finally gotten a job. According to Google, since March 2020 it has used such links as hints for crawling and indexing.
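To see how your own pages mark their links, you can list every link together with its rel value. The sketch below is only an illustration built on Python’s standard html.parser module. The names LinkRelAuditor and audit_links and the sample HTML are made up for this example. It also groups the sponsored and ugc values with nofollow, which is our assumption based on Google treating all three as hints.

```python
from html.parser import HTMLParser


class LinkRelAuditor(HTMLParser):
    """Collects every <a href> on a page together with its rel tokens."""

    def __init__(self):
        super().__init__()
        self.links = []  # list of (href, set_of_rel_tokens)

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        href = attr_map.get("href")
        if href is None:
            return  # anchors without href are not links
        # rel may be missing or empty; normalise it to a set of lowercase tokens
        rel_tokens = set((attr_map.get("rel") or "").lower().split())
        self.links.append((href, rel_tokens))


def audit_links(html):
    """Return (followed, hinted) lists of hrefs found in an HTML string."""
    # Assumption: sponsored and ugc are grouped with nofollow as "hint" links
    hint_tokens = {"nofollow", "sponsored", "ugc"}
    parser = LinkRelAuditor()
    parser.feed(html)
    followed = [href for href, rel in parser.links if not rel & hint_tokens]
    hinted = [href for href, rel in parser.links if rel & hint_tokens]
    return followed, hinted


# Hypothetical page fragment, used only to show the output shape
sample_html = """
<p><a href="https://example.com/guide">Guide</a>
<a href="https://example.org/ad" rel="sponsored nofollow">Ad</a>
<a href="/comment-link" rel="UGC">Comment</a></p>
"""
followed, hinted = audit_links(sample_html)
```

Here followed holds the plain links and hinted holds the links Google may treat as hints. Running this over your key pages gives a quick picture of which outgoing links carry which attribute.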

Remedy: Provide users with relevant, clear and informative content that gives clear answers to queries. Optimize UX, especially the mobile version of your website, so that users can comfortably read and interact with the website’s components. Prove your authority not only with diplomas, but via links, reputable sources of information, and fresh content as well.

Google December 2020 Core Update

Release date: December 2020

The Google December 2020 Update was a loud event in the SEO world. This broad update showed no regard to specific countries, languages, or niches. The SERP’s fluctuations were a matter of concern during the rollout which started on December 3 and ended on December 16. What a Christmas present from Google!

There are no particular niches that suffered the most. According to reports from SEO agencies, this update affected all industries. Observers suggest that its focus was on E-A-T and the quality of content. We shouldn’t forget that content has always been the most critical thing for Google and has already been touched upon in a series of previous updates.

Let’s look at the experience of Ignite Visibility with this update. According to John Lincoln, after the May 2020 Core Update, the company focused on putting their Core Web Vitals scores and old content right. They refreshed their old content to make its data and figures accurate and up to date. As a result, they experienced an increase in rankings after the December update.

Remedy: Google itself offers a universal cure. At that moment Core Web Vitals were gaining significance rapidly. Now they are among the most important ranking factors. What you should do is check your Core Web Vitals scores and optimize them to comply with Google’s requirements.
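As a rough illustration of what “complying” means, the sketch below rates measured values against the thresholds Google has published for the three metrics (LCP good up to 2.5 s and poor above 4 s, FID good up to 100 ms and poor above 300 ms, CLS good up to 0.1 and poor above 0.25). The function names and the sample measurements are hypothetical. In practice you would take the numbers from a tool such as PageSpeed Insights or the Search Console report.

```python
# Thresholds published by Google for each metric: (good_up_to, poor_above)
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # Largest Contentful Paint, seconds
    "FID": (100, 300),   # First Input Delay, milliseconds
    "CLS": (0.1, 0.25),  # Cumulative Layout Shift, unitless score
}


def rate_metric(name, value):
    """Classify one measurement as good, needs improvement or poor."""
    good_up_to, poor_above = THRESHOLDS[name]
    if value <= good_up_to:
        return "good"
    if value <= poor_above:
        return "needs improvement"
    return "poor"


def rate_page(measurements):
    """Rate every metric in a {metric_name: value} mapping."""
    return {name: rate_metric(name, value) for name, value in measurements.items()}


# Hypothetical measurements for a single page
sample = {"LCP": 3.1, "FID": 80, "CLS": 0.31}
report = rate_page(sample)
```

For the sample page, report marks LCP as needing improvement, FID as good and CLS as poor, so layout stability would be the first thing to fix.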

Google Passage Ranking Update

Release date: February 2021

This update doesn’t ask the SEO world to optimize any specific aspect of a website. It changes how Google understands content. The Passage Ranking Update has opened a door to a better understanding of people’s needs. This is a globally focused update across all industries.

The short outline of the update is that Google will analyze content deeper by evaluating each passage on the page instead of getting the meaning of the whole page. This approach will help present people with the information they really need. The main change will be seen in the rankings as usual.

Now, if your content is not entirely relevant to a searcher’s query, but has a little part that directly answers a person’s question, this page will have a chance to get higher in the SERP for that question.

I suppose you have noticed the yellow highlighted text that Google takes you to when you try to find some specific information? That’s it.

Remedy: the only way to prepare for such changes or comply with them is to work on your content: use only up-to-date data, properly structure text fragments and always consider relevance.

Google Product Reviews Update

Release date: April 2021

This update was not as big as the core ones. Its main focus was on product reviews, specifically the level of expertise they show. A short, typical review will not have much value. If your website mainly aims at reviewing different products, it’s high time to reconsider your writing strategy and start relying on the Google guidelines which will be presented below.

The retail industry has experienced the biggest losses in the rankings because reviews are one of the most important aspects of their prosperity.

Remedy: now, you have to pay more attention to the reviews you have on your website. Google offers a list of questions to ask yourself when you write a review of a product. Do your reviews:

- Express expert knowledge about products where appropriate?

- Show what the product is like physically, or how it is used, with unique content beyond what’s provided by the manufacturer?

- Provide quantitative measurements about how a product measures up in various categories of performance?

- Explain what sets a product apart from its competitors?

- Cover comparable products to consider, or explain which products might be best for certain uses or circumstances?

- Discuss the benefits and drawbacks of a particular product, based on research into it?

- Describe how a product has evolved from previous models or releases to provide improvements, address issues, or otherwise help users in making a purchase decision?

- Identify key decision-making factors for the product’s category and how the product performs in those areas? For example, a car review might determine that fuel economy, safety, and handling are key decision-making factors and rate performance in those areas.

- Describe key choices in how a product has been designed and their effect on the users beyond what the manufacturer says?

Google June and July 2021 Core Updates

Release dates: June 2 – June 12 & July 1 – July 12, 2021

This update was a global one. Its peculiarity was that it came in two stages. The June update was the first part, and the second part followed in July.

It’s important to mention that the June update had a more noticeable impact than its successor.

According to Search Engine Land, YMYL websites (finance, law, health, etc.) were hit harder than others. Industries that touch people’s well-being have experienced a lot of fluctuations over the years.

SEMrush stated that the Food & Drink, Law & Government, and Internet & Telecom sectors won the most during this update, whereas YMYL websites (Jobs & Education, Business & Industrial) went through hardships.

However, we can’t say for sure, because many websites haven’t gone through a lot of changes in their rankings.

The June update was a long-awaited event because Core Web Vitals have become an official Google ranking factor.

The July update was the second part of the June Core Update, and webmasters have said that it was not as tough as the previous one.

According to SEMrush, the niches that experienced the biggest changes during this period were Real Estate, Shopping, Beauty & Fitness, Science, and Pets & Animals, whereas Finance, Arts & Entertainment, Games, Sports, Jobs & Education, Food & Drink, and News were less affected.

Remedy:

- brush up on your Core Web Vitals and optimize your website to properly comply with new requirements,

- if your website belongs to the YMYL category of websites, work on your content to prove that it’s reliable and furnish more evidence of your high level of expertise in the niche.

Google Spam Updates

Release dates: June 23 & June 28, 2021

Google has been fighting spam since the beginning of time. These particular updates were dedicated to it as well. If your website felt changes in its rankings during these two days, it’s a strong signal for you to check your website inside out and find holes in your anti-spam protection.

The Spam update was also a global one.

Remedy: eliminate any signs of spam from your website.
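To check whether your website actually “felt” an update, you can compare average daily traffic before and after the release date. The following is a minimal sketch, assuming you have exported daily clicks (for example from Google Search Console) into a dictionary keyed by date. The function names, the 7-day window and the sample numbers are all hypothetical.

```python
from datetime import date, timedelta
from statistics import mean


def window_mean(daily_clicks, start, days):
    """Average clicks over `days` consecutive days starting at `start`."""
    wanted = (start + timedelta(days=i) for i in range(days))
    values = [daily_clicks[day] for day in wanted if day in daily_clicks]
    return mean(values) if values else None


def change_around(daily_clicks, update_day, window=7):
    """Percent change in average daily clicks after an update vs. before it.

    Returns None when there is not enough data to compare.
    """
    before = window_mean(daily_clicks, update_day - timedelta(days=window), window)
    after = window_mean(daily_clicks, update_day, window)
    if not before or after is None:
        return None
    return (after - before) * 100 / before


# Hypothetical data: 1000 clicks a day before June 23, 2021 and 700 a day after
sample_clicks = {}
first_day = date(2021, 6, 16)
for offset in range(14):
    day = first_day + timedelta(days=offset)
    sample_clicks[day] = 1000 if day < date(2021, 6, 23) else 700

drop = change_around(sample_clicks, date(2021, 6, 23))
```

A clearly negative value right after a release date is a hint, not proof, that the update affected you. Seasonal swings and tracking changes can produce similar drops, so compare with the same period in earlier weeks as well.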

Google Page Experience Update

Release dates: June & August 2021

This update concerns the conditions people experience on a website: the better the experience the better your rankings.

It will be fully rolled out by the end of August. The name of the update tells us clearly about its main goal: to let websites with a great user experience stand out against those that don’t meet the requirements.

The following signals are important for delivering a good page experience in Google Search:

Changes will be applied to the News section in the SERPs as well: the AMP format will no longer be required for a website to appear in the Top Stories Carousel section as long as other conditions are met. Also, the Core Web Vitals scores and the page experience status will not prevent news from appearing in this section.

To help webmasters understand how things work, Google has introduced the Page Experience report.

Remedy: optimize your website for the points mentioned in the table or at least check whether you have any problems with them.

TO SUM UP, year by year, Google tries to improve the experience of its users, releasing updates that have the potential to make each search for information easier and more satisfying (you may disagree if your site dropped in the rankings, but you can work with us to bring them back up). The updates we’ve mentioned in this article had a huge impact on the SERPs. There have actually been many more updates than the ones we covered, plenty of which were never announced to the public. Maintain your rankings with WebCEO’s Rank Tracking Tool and protect your website from further Google algorithm updates.